This is part 7 of my series on metadata for scanned pictures.

Part 1: The Scanned Image Metadata Project

Part 2: Standards, Guidelines, and ExifTool

Part 3: Dealing with Timestamps

Part 4: My Approach

Part 5: Viewing What I Wrote

Part 6: The Metadata Removal Problem

Part 7: Thoughts after 4000+ Scans (this post)

In January 2022, I explained in parts 1-4 of this series why and how I store metadata in image files containing scans of, e.g., 35mm slides. I failed to mention that the strategy I came up with was largely based on merely thinking about the problem. At the time, I had made relatively few scans. However, I had contracted with Chris Harmon at Scan-Slides to scan several thousand old slides, so I had to specify how the metadata was to be handled. The approach I developed was what I directed Scan-Slides to do.

In the 18 months since then, I've received the image files from Chris, worked with the metadata, and added scans of a few other types of objects (e.g., drawings, notes, and letters). My perspective on image file metadata is now based not just on thinking, but also on experience. It's a good time to reflect on how well my metadata strategy has held up.

Metadata Entry

One thing has held up very well: the decision to have Scan-Slides do the initial metadata entry. It was clear from the get-go that this was going to be demanding work. It required deciphering hand-written descriptive information on slide trays and slide frames and, for each scan, entering the information into the appropriate metadata fields in the proper format. Several slide sets were from overseas trips, so many descriptions refer to locations in or people from places that look, well, foreign, e.g., "Church at Ste Mere Eglise" and "Sabine & Sylvie - Fahrradreise in Langeland."

Copying the "when developed" date off slide frames proved unexpectedly challenging. Although many dates were clearly printed, some were debossed rather than printed, and those were harder to read. Some timestamps were misaligned with the slide frames they were printed on, so parts of the dates were missing (e.g., the lower half of the text, or its left or right side). Some frames had no development date on them, but it was hard to distinguish those from frames with faint timestamp ink or debossed dates that were only slightly indented.

Chris handled description and development date issues with patience and equanimity. His accuracy was impressive. There were some errors, but that's to be expected when you're copying descriptions from thousands of slides involving places, people, and languages you don't know. I don't believe anyone would have done better.

It's something of a miracle that he was willing to do the metadata entry at all. Of the half-dozen slide scanning services I contacted, he was the only one who didn't reject it out of hand. There was an extra fee for the work, of course, but it was money well spent. It allowed me to devote my energies to aspects of the project that only I could do, e.g., correcting metadata errors and organizing image sets.

Four Metadata Fields

Using only four metadata fields (Description, When Taken, When Scanned, and Copyright) has proven sufficient for my needs. Populating those fields hasn't been burdensome. I feel like I got this part of metadata storage right.
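This post doesn't restate which standard tags sit behind each of the four fields. Purely as an illustration, here is a Python sketch that builds a single ExifTool command writing all four. The tag names are real ExifTool tags, but the mapping from each field to its tags (`FIELD_TAGS`) and the helper `exiftool_args` are assumptions of mine, not necessarily the choices made in this series.

```python
from typing import Dict, List

# ASSUMED mapping of the four logical fields to ExifTool tag names.
# The tag names exist in ExifTool; which ones back each field is an
# illustrative choice, not necessarily the one this series uses.
FIELD_TAGS: Dict[str, List[str]] = {
    "description": ["EXIF:ImageDescription", "XMP-dc:Description", "IPTC:Caption-Abstract"],
    "when_taken": ["EXIF:DateTimeOriginal"],
    "when_scanned": ["EXIF:CreateDate"],
    "copyright": ["EXIF:Copyright", "XMP-dc:Rights", "IPTC:CopyrightNotice"],
}


def exiftool_args(path: str, **fields: str) -> List[str]:
    """Build an ExifTool argument list that writes the given logical fields to path.

    Hypothetical helper: keyword names must be keys of FIELD_TAGS.
    """
    args = ["exiftool"]
    for field, value in fields.items():
        if field not in FIELD_TAGS:
            raise ValueError(f"unknown field: {field}")
        for tag in FIELD_TAGS[field]:
            args.append(f"-{tag}={value}")
    args.append(path)
    return args
```

Passing the resulting list to `subprocess.run(args, check=True)` sidesteps shell quoting, which matters for descriptions containing characters like the ampersand in "Sabine & Sylvie". EXIF date fields expect the `YYYY:MM:DD HH:MM:SS` layout.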

The "When Taken" Problem

Using the Description metadata fields to store definitive information about when a picture was taken, and storing an approximation of that in the "when taken" metadata fields, has worked acceptably, but this remains a thorny issue. I still think it's prudent to follow convention and assign unknown timestamp components the earliest permissible value, but I continue to find it counterintuitive that images with less precise timestamps chronologically precede images with more precise ones. A slide known only to be from 1976, for example, gets a "when taken" date of January 1, 1976, so it sorts ahead of a slide known to be from July 1976.
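The "earliest permissible value" convention takes only a few lines to express. This Python sketch assumes what's known is a year plus an optional month and day, and it formats the result in the `YYYY:MM:DD HH:MM:SS` layout EXIF uses for `DateTimeOriginal`. The function name is hypothetical.

```python
from datetime import datetime
from typing import Optional


def earliest_exif_timestamp(year: int, month: Optional[int] = None,
                            day: Optional[int] = None) -> str:
    """Fill unknown date parts with their earliest permissible values.

    Unknown month -> January, unknown day -> the 1st, time -> midnight.
    Returns a string in EXIF's "YYYY:MM:DD HH:MM:SS" layout.
    """
    if day is not None and month is None:
        raise ValueError("a day without a month can't be placed on the calendar")
    moment = datetime(year,
                      1 if month is None else month,
                      1 if day is None else day)  # datetime() also validates the date
    return moment.strftime("%Y:%m:%d %H:%M:%S")
```

Because these strings sort lexically in chronological order, `earliest_exif_timestamp(1976)` (`"1976:01:01 00:00:00"`) lands ahead of `earliest_exif_timestamp(1976, 7)` (`"1976:07:01 00:00:00"`), which is exactly the counterintuitive ordering described above.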

The vaguer the "when taken" data, the less satisfying the "use the earliest permissible timestamp values" approach. Google Photos--one of the most important programs I use with digital images--insists on sorting photo libraries chronologically, so there's no escaping the tyranny of "when taken" timestamps. (Albums in Google Photos have sorting options other than chronological, but for a complete photo library, sort-by-date is the only choice.) For images lacking "when taken" metadata, Google Photos uses the file creation date. This is typically off by decades for scans of my slides and photos, so I've found that omitting "when taken" metadata is worse than putting in even a poor approximation.

Overall, my "put the truth in the Description fields (where you know programs will ignore it) and an approximation in the 'when taken' fields (where you know programs will treat it as gospel)" approach is far from satisfying, but I don't know of a better one. If you do, please let me know.

Making Image File Metadata Standalone

I originally believed I was storing all image metadata in image files, but I was mistaken. Inadvertently, I stored some of the metadata in the file system. Scans of the slides from my 1976 trip to Iceland, for example, are in a directory named Iceland 1976, and the files are named Iceland 1976 001.jpg through Iceland 1976 146.jpg. The metadata in the image files indicate what's in the images and when the slides were taken, but they don't indicate that they were taken in Iceland or that they were from a single trip. That information is present only in the image file names and the fact that they share a common directory.

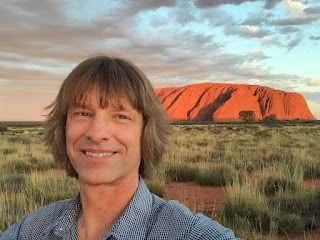

Going forward, I plan to include such overarching information about picture sets in each of the scans in the set. That will make the Description metadata in each image file longer and more cumbersome, but it will also make each image file self-contained. As things stand now, if a copy of Iceland 1976 095.jpg (shown) somehow got renamed and separated from the other files in the set (e.g., because it was sent to someone using iMessage), there would be no way to determine from the file itself that the picture was taken in Iceland or that it was part of the set of slides I took during that trip. My updated approach will rectify that.
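One way to fold set-level information into each file is to derive it from where the file lives now, before it has a chance to wander off. This Python sketch assumes the directory name (e.g., `Iceland 1976`) is the set name and prefixes it to the per-image description. The helper name and the `Set: caption` layout are hypothetical choices, not a standard.

```python
from pathlib import PurePath


def self_contained_description(image_path: str, description: str) -> str:
    """Prefix a per-image description with the name of its picture set.

    ASSUMPTION: the set name is the name of the directory holding the image,
    e.g. "Iceland 1976" for "Iceland 1976/Iceland 1976 095.jpg".
    """
    set_name = PurePath(image_path).parent.name
    # Leave the description alone if there's no set name or it's already present,
    # so running the helper twice doesn't double the prefix.
    if not set_name or description.startswith(set_name):
        return description
    return f"{set_name}: {description}"
```

Making the helper safe to rerun matters because, as described below, metadata revisions tend to be iterative.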

Putting All Metadata into Image Files

The slides I had scanned were up to 70 years old. Over the decades, slides got moved from one collection to another. Some got mislabeled or misfiled. As I was reviewing the scans, I often found myself wondering whether a picture I was looking at was related to other pictures I'd had scanned. My parents may have made multiple trips to Crater Lake or Yosemite National Parks in the 1950s and 1960s, for example, but I'm not sure, because there's not enough descriptive information on the slides (or the boxes they were in) to know.

More than once I wished I could find a way to reconstitute the set of slides that came from a particular roll of film. I think this is often possible. In addition to hints in the images themselves (e.g., what people are wearing, what's in the background, etc.), slide frames are made of different materials and often include slide numbers, the film type, and the name of the processing lab. Development date information, if present, is either printed or debossed, and, when printed, the ink has a particular color (typically black or red). All these things can be used to determine whether two potentially related slides are likely to have come from the same roll of film.

I briefly went down the road of creating a spreadsheet summarizing these factors for various sets of slides, but it's a gargantuan job. It didn't take long for me to stop, slap myself, and reiterate that I was working on family photos, not cataloging historic imagery for scholarly research. Nevertheless, I think it would be nice to have more metadata about slide frames in the image files. Whether Chris (or anybody else) would agree to enter such information as part of the scanning process, I don't know.
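The same-roll reasoning is essentially a consistency check: any clue that both frames carry must agree. Here is a Python sketch of that check, assuming a hypothetical `SlideFrame` record with one field per slide-frame clue mentioned above. Missing or unreadable clues are `None` and don't count against a match.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass(frozen=True)
class SlideFrame:
    """Hypothetical record of slide-frame clues; None means absent or unreadable."""
    material: Optional[str] = None     # e.g., "cardboard" or "plastic"
    film_type: Optional[str] = None
    lab: Optional[str] = None          # name of the processing lab
    date_style: Optional[str] = None   # "printed" or "debossed"
    ink_color: Optional[str] = None    # printed dates only, e.g., "black" or "red"


def could_share_roll(a: SlideFrame, b: SlideFrame) -> bool:
    """Return False if any clue recorded for both frames differs.

    Agreement doesn't prove a common roll; it only fails to rule one out.
    """
    for f in fields(SlideFrame):
        va, vb = getattr(a, f.name), getattr(b, f.name)
        if va is not None and vb is not None and va != vb:
            return False
    return True
```

Visual hints in the images themselves (clothing, backgrounds) remain a human judgment call; a check like this can only narrow the candidates.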

Dealing with Google Photos

Words cannot describe how useful I find Google Photos' search capability. Despite the effort I've invested adding descriptive metadata to scanned image files and the time I've taken to help Google Photos (GP) identify faces of family and friends, it's not uncommon for me to search for images with visual characteristics that are neither faces nor described in the metadata, e.g., "green house" or "wall sconce". Such searches turn up what I'm looking for remarkably often. The alternative--browsing my library's tens of thousands of photos--is impractical. This makes Google Photos indispensable. That has some implications.

When Scan-Slides started delivering image files, I plopped them into a directory on my Windows machine where GP automatically uploads new pictures. I then went about the task of reviewing and, in some cases, revising the metadata in the scans. In many cases, this involved adjusting the "when taken" metadata, either because the information I'd given Scan-Slides was incorrect (e.g., mis-labeled slide trays) or because Scan-Slides had made an error when entering the data. I also revised Description information to make it more comprehensive (e.g., adding names of people who hadn't been mentioned on the slide frames) or to make the wording consistent. The work was iterative, and I often used batch tools to edit many files at once.

Unbeknownst to me, GP uploaded each revised version of each image file I saved. And why not? Two image files differing only in metadata are different image files! By the time I realized what was happening, GP had dutifully uploaded as many as a half dozen versions of my scans. I wanted only the most recent version in each set of replicates, but Google offers virtually no tools for identifying identical images with different metadata. The fact that I'd often changed the "when taken" metadata during my revisions and that GP always sorts photo libraries chronologically meant that different versions of the same image were often nowhere near one another.

The lesson, to borrow a term from Buffy the Vampire Slayer, is that iteratively revising image file metadata and having Google Photos automatically upload new image files are un-mixy things.

I told GP to stop monitoring the directory where I put my scans, spent great chunks of time eradicating the thousands of scan files GP had uploaded, and resolved to manually upload my scans only after I was sure the metadata they contained was stable. A side-effect was that I could no longer rely on GP acting as an automatic online backup mechanism for my image files, but since I have a separate cloud-based backup system in place, that didn't concern me.
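Google Photos may not help here, but for local JPEG copies, spotting replicates that differ only in metadata is fairly mechanical, assuming the edits were made with a tool that rewrites metadata without re-encoding the image (ExifTool behaves this way). The Python sketch below hashes each file while skipping its APPn and COM segments (where Exif, XMP, IPTC, and comments live), then groups files whose hashes match. The function names are hypothetical, and the approach only finds replicates among files on disk; it can't see into a Google Photos library. Skipping every APPn segment also ignores embedded color profiles, which is acceptable for this purpose but worth knowing.

```python
import hashlib
from collections import defaultdict
from pathlib import Path
from typing import Dict, List

# APP0-APP15 (0xE0-0xEF) and COM (0xFE) segments hold metadata such as
# JFIF, Exif/XMP (APP1), ICC profiles (APP2), IPTC (APP13), and comments.
METADATA_MARKERS = set(range(0xE0, 0xF0)) | {0xFE}
SOS = 0xDA  # Start of Scan: compressed image data follows


def jpeg_pixel_hash(data: bytes) -> str:
    """SHA-256 of a JPEG's bytes with metadata segments skipped."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG (missing SOI marker)")
    digest = hashlib.sha256()
    pos = 2
    while pos + 1 < len(data):
        if data[pos] != 0xFF:
            raise ValueError(f"expected a marker at offset {pos}")
        marker = data[pos + 1]
        if marker == 0xFF:  # fill byte preceding a marker
            pos += 1
            continue
        if marker == SOS:
            digest.update(data[pos:])  # hash everything from the scan onward
            break
        # Segment length is big-endian and includes its own two bytes.
        length = int.from_bytes(data[pos + 2:pos + 4], "big")
        end = pos + 2 + length
        if marker not in METADATA_MARKERS:
            digest.update(data[pos:end])
        pos = end
    return digest.hexdigest()


def find_replicates(folder: str) -> List[List[Path]]:
    """Group JPEGs under folder whose non-metadata bytes are identical."""
    groups: Dict[str, List[Path]] = defaultdict(list)
    for path in sorted(Path(folder).rglob("*")):
        if path.is_file() and path.suffix.lower() in (".jpg", ".jpeg"):
            groups[jpeg_pixel_hash(path.read_bytes())].append(path)
    return [paths for paths in groups.values() if len(paths) > 1]
```

Within each group returned by `find_replicates`, the file with the newest modification time is a reasonable guess at the version to keep, though that assumes file times weren't disturbed by copying.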

Multiple-Sided Objects

Most scannable objects have two sides: a front and a back. For photographs, it often makes sense to scan both sides, because information about a photo is commonly written on the back. (If scans of slides included not just the film but also the slide frames, it would make sense to scan both sides of the frames, too, thus providing a digital record of the slide numbers, film types, processing labs, etc., that I mentioned above.)

Scanning both sides of a two-sided object (e.g., the front and back of a photograph) yields two image files. That's a problem. Separate image files can get, well, separated, in which case you could find yourself with a scan of only one side of an object. Preventing this requires finding a way to merge multiple images together.

I say multiple images, because there might be more than two. Consider a letter consisting of two pieces of paper, each of which has writing on both sides. A scan of the complete letter requires four images: one for each side of each piece of paper. If the letter has an envelope, and if both sides of it are also scanned, the single letter object (i.e., the letter plus its envelope) would yield six different images.

Some file formats support storing more than one "page" (i.e., image) in a file. TIFF is probably the most common. Unfortunately, TIFFs, even when compressed, are much larger than the corresponding JPGs--about three times larger, in my experiments. More importantly, TIFF isn't among the file formats supported by Google Photos. When multi-page TIFFs are uploaded to GP, GP displays only the first page. For me, that's a deal breaker.

It's natural to consider PDF, but PDF isn't an image file format, so it doesn't offer image file metadata fields. In addition, PDF isn't supported by GP. Attempts to upload PDFs to Google Photos fail.

My approach to the multiple-images-in-one-file problem is to address only double-sided objects (e.g., photographs, postcards, etc.). I handle them by digitally putting the front and back scans side by side and saving the result as a new image file (as at right). A command-line program, ImageMagick, makes this easy. The metadata for the new file is copied from the first of the files that are appended, i.e., from the image for the front of the photograph.
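The post doesn't show the exact commands, so here is a hedged Python sketch of the two steps. It assumes ImageMagick 7 (whose command is `magick`; ImageMagick 6 uses `convert`) and ExifTool are on the PATH. `+append` joins images left to right. The ExifTool step explicitly copies the front scan's tags into the combined file, as a safeguard in case ImageMagick doesn't carry everything over; check the result before relying on it. The function names are hypothetical.

```python
import subprocess
from typing import List


def side_by_side_commands(front: str, back: str, out: str) -> List[List[str]]:
    """Commands that join front and back scans horizontally, then copy the
    front scan's metadata into the combined file."""
    return [
        # ImageMagick 7: "+append" places images left to right ("-append" stacks them).
        ["magick", front, back, "+append", out],
        # ExifTool: copy all tags from the front scan, preserving their groups,
        # and don't leave an "_original" backup file behind.
        ["exiftool", "-TagsFromFile", front, "-all:all", "-overwrite_original", out],
    ]


def combine_front_and_back(front: str, back: str, out: str) -> None:
    """Run the commands in order, stopping at the first failure."""
    for cmd in side_by_side_commands(front, back, out):
        subprocess.run(cmd, check=True)
```

Note that the combined JPEG is a fresh encode, so ImageMagick's `-quality` setting may be worth specifying. If the two scans differ in height, `+append` pads the shorter one with the background color, which `-background` controls.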

I haven't yet had to deal with objects that require more than two scans, e.g., the hypothetical four-page letter and its envelope. My current thought is that the proper treatment for those is to ignore Google Photos and just use PDF. I'm guessing that most such objects will be largely textual (as would typically be the case for letters), in which case OCR and text searches will be more important than image file metadata and image searches.